Can AI clone an unknown writer's style?

Gabriel Pickard is the hard case. 10 blog posts from 2020, no book, no viral essays. If Noren works on him, the model isn't cheating.

Our Paul Graham case study was the easy case. PG is one of the most-represented writers in LLM training data. The model already knows how he writes.

Gabriel Pickard is the hard case.

He's a software engineer and technical writer who blogs at gabrielpickard.com. He posts on Twitter as @werg. His blog has 10 posts, all from 2020. No book, no viral essays, no meaningful presence in training data.

If Noren can reproduce his voice, the model isn't cheating.

A voice that breaks its own rules

Gabriel writes like an academic who keeps interrupting himself: technical depth followed by casual irreverence, contrarian framings wrapped in self-deprecation, register shifts mid-paragraph with no warning.

"I was a 0.1x programmer trapped in the body of a conversant technologist."

from "I'm not a tinkerer" (2020)

"Well, I have come to tell you that these matters aren't quite the way they seem."

from "Programming languages shouldn't and needn't be Turing complete" (2020)

"If you decide you do desperately need someone to make sweet, sweet Science to your Data; I'm a Sciencer of Data myself, possibly accepting clients."

from "Data Science is dying out" (2020)

His vocabulary mixes academic precision ("tractable language semantics," "primitive recursive functions") with blunt informality ("bla bla bla," parenthetical confessions). Sentence length ranges from 1 word to 45. Titles like "Data Science is dying out" tell you the contrarian framing is structural, not decorative.

The patterns work against each other, and that's what makes it a real test.

The extraction

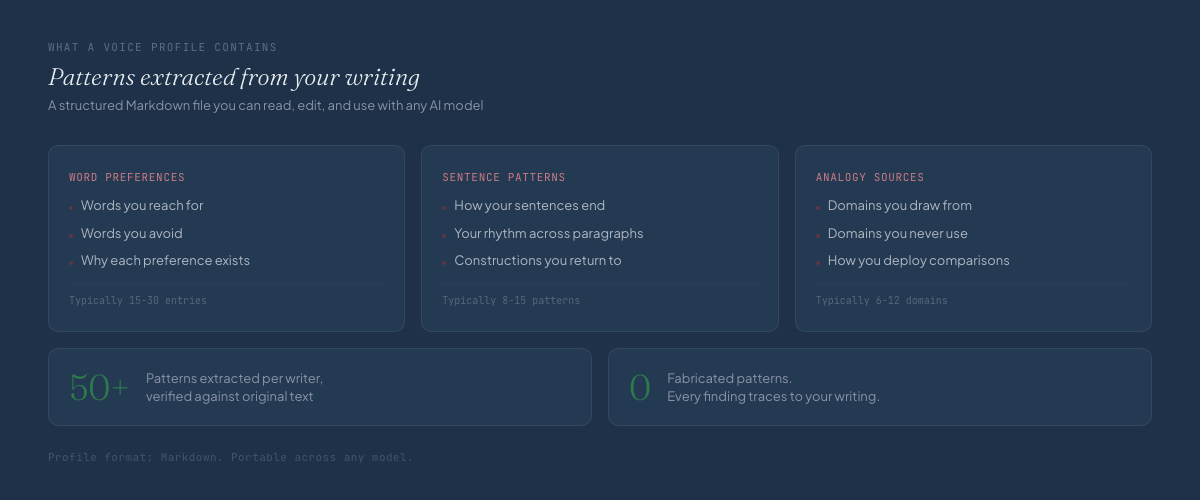

We ran Noren on 10 blog posts and 100 tweets.

| Metric | Value |
|---|---|
| Profile length | 367 lines |
| Patterns extracted | 50+ |
| All findings verified | Yes |

What the profile captured:

- Register breaks: academic formality to casual confession, mid-paragraph

- Extreme sentence length variance within the same piece

- Signature phrases deployed by context, not by position

- Multiple emotional registers, from academic detachment to personal vulnerability

- Distinctive analogy sources drawn from specific, recurring domains
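Sentence length variance is the easiest of these patterns to measure directly. Here is a minimal sketch, assuming a naive split on sentence-ending punctuation. The function names and the sample text are made up for illustration, and this is not how Noren's extraction works internally.

```python
import re
from statistics import pstdev


def sentence_lengths(text: str) -> list[int]:
    """Word count per sentence, splitting naively after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_profile(text: str) -> dict[str, float]:
    """Shortest sentence, longest sentence, and population std deviation."""
    lengths = sentence_lengths(text)
    return {"min": min(lengths), "max": max(lengths), "spread": pstdev(lengths)}


# Invented sample text, not a quote from Gabriel's posts.
sample = (
    "No. That was never the plan, and frankly nobody involved "
    "thought it would work for long. Then it did."
)
profile = length_profile(sample)
print(profile["min"], profile["max"], round(profile["spread"], 2))
```

The naive splitter will miscount around ellipses and abbreviations, so treat the numbers as a rough signal rather than an exact measurement.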

Three generators, one profile

Gabriel wanted to write about a specific idea: AI is fundamentally computation, and the real breakthrough happens when models can loop. He provided notes. Noren generated a blog post and a Twitter thread.

Same profile, same notes, three generators.

Each output was scored on voice fidelity by a blind evaluator. Composite scores (out of 5):

| Generator | Blog score | Thread score |
|---|---|---|
| Opus | 2.8 | 2.4 |
| Sonnet | 4.0 | 3.6 |
| Gemini | 3.2 | 3.6 |

Scores range from 2.4 to 4.0. The generator matters as much as the profile.
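To make the spread concrete, the scores in the table can be summarized in a few lines. This is a sketch only: averaging the blog and thread scores into one number per generator is our assumption for illustration, not part of the evaluation itself.

```python
# Composite scores copied from the table above.
scores = {
    "Opus": {"blog": 2.8, "thread": 2.4},
    "Sonnet": {"blog": 4.0, "thread": 3.6},
    "Gemini": {"blog": 3.2, "thread": 3.6},
}


def mean_score(by_format: dict[str, float]) -> float:
    """Unweighted mean across formats (an assumed way to combine them)."""
    return round(sum(by_format.values()) / len(by_format), 2)


means = {generator: mean_score(s) for generator, s in scores.items()}
best = max(means, key=means.get)

# Gap between the highest and lowest individual score in the table.
all_scores = [v for s in scores.values() for v in s.values()]
spread = round(max(all_scores) - min(all_scores), 2)

print(means, best, spread)
```

Same profile, same notes, and the top and bottom scores still sit 1.6 points apart on a 5-point scale.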

Where Opus went wrong

Opus treated the voice profile as a checklist.

Opus opening:

"Well, I have come to tell you that we've been thinking about artificial intelligence backwards."

That's the first sentence. Gabriel does use "Well, I have come to tell you," but it's a mid-essay move, never an opener. Opus found it in the profile and slotted it into position one. Checkbox ticked.

Sonnet opening:

"There is a framing of large language models that treats them as oracles. You ask a question, the oracle speaks, and that's the transaction. Input, output, done."

No forced signature phrase. Instead, a genuine contrarian reframe: name the dominant narrative precisely, then dismantle it. That's how Gabriel actually writes.

Opus closing:

"So, what are you waiting for? The path from here to there isn't completely clear, but the direction is obvious."

Mechanical deployment again: another signature phrase at the expected position.

Sonnet closing:

"That's not a solved problem. It might be the most important unsolved problem in the field right now."

Understated conviction, no forced callback, the idea stated plainly.

Opus missed the register breaks entirely. The whole piece holds a single formal tone: no distinctive vocabulary, no parenthetical asides, and generic analogies in place of Gabriel's recurring domains.

The result reads like a competent tech blog post. It could be anyone.
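The opener mistake is simple to check for mechanically. Below is a rough sketch, assuming a naive sentence splitter. `phrase_position` and `is_forced_opener` are hypothetical helpers written for this post, not part of Noren, and the two filler sentences in the draft are placeholders.

```python
import re


def phrase_position(text: str, phrase: str):
    """Relative position of the first sentence containing `phrase`.

    0.0 means the first sentence, 1.0 the last. Returns None if absent.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    for index, sentence in enumerate(sentences):
        if phrase.lower() in sentence.lower():
            return index / max(len(sentences) - 1, 1)
    return None


def is_forced_opener(text: str, phrase: str) -> bool:
    """True when a mid-essay phrase shows up in the very first sentence."""
    return phrase_position(text, phrase) == 0.0


PHRASE = "Well, I have come to tell you"

# The Opus opening quoted above, followed by placeholder filler sentences.
draft = (
    "Well, I have come to tell you that we've been thinking about "
    "artificial intelligence backwards. Second sentence. Third sentence."
)
print(is_forced_opener(draft, PHRASE))
```

A check like this only catches placement. It says nothing about whether the rest of the piece sounds like the writer.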

Where Sonnet got it right

Sonnet absorbed the voice patterns and deployed them where they belonged.

Register break (Sonnet):

"Not in the loose, hand-wavy sense where everything a computer does is 'computation.' In the specific, architectural sense: state is being maintained, procedures are being applied over that state, and the results of those procedures feed back into subsequent steps."

A casual dismissal ("loose, hand-wavy") interrupts an otherwise technical argument. Gabriel does this constantly.

Confident Uncertainty (a named pattern from the profile):

"We don't yet know exactly how reliable these self-correction dynamics are, or under what conditions they hold. But dismissing long agentic runs because of compounding entropy is starting to look like the wrong prior. The results speak."

Acknowledges uncertainty, then pushes past it. Sonnet deployed this where the argument called for it, not where a checklist said it should go.

Same voice, different years

Real Gabriel (from "I'm not a tinkerer," 2020):

"I fundamentally confused writing software with yak shaving. I would push myself deeper and deeper into theory and prescient optimizations, until the project just kind of became inert... sat there a while... and then I slowly, slowly, imperceptibly gave up on it."

Generated (Sonnet, "AI is computation," 2026):

"The loops that make computation powerful are being introduced. They're just being introduced at the harness level... The model gets called, produces output, that output modifies some state... and then the model gets called again with updated context."

Both start with a clean statement, then shift into something raw and granular. Short follow-up sentences that land like punctuation. The pattern holds across unrelated topics, six years apart.

Real Gabriel (from "Data Science is dying out," 2020):

"Data Scientists distinguish themselves from Analysts by knowing a bit more about programming. They distinguish themselves from Software Engineers by knowing less about programming."

Generated (Sonnet, "AI is computation," 2026):

"In terms of formal computational complexity, you are somewhere in the neighborhood of context-free. Well beyond a finite state automaton, genuinely impressive, but nowhere near the arbitrary depth that makes Turing-complete computation so powerful."

Same rhetorical move: define something by its position between two other things. Let the positioning make the argument.

What one case study tells you

Noren works for writers the model hasn't memorized. Every voice marker in the generated output came from the profile, not from memorization. Sonnet scored 4.0 on a writer with no meaningful presence in training data. PG scored 4.3 with Opus. The gap is 0.3 points.

Different voices need different generators. Opus won for PG because his voice is controlled and precise, so literal adherence reads as authentic. For Gabriel's looser, register-breaking voice, literal adherence reads as mechanical. Sonnet's internalized interpretation scores higher.

| Voice type | Best generator | Why |
|---|---|---|
| Controlled, precise (PG) | Opus | Literal adherence = authentic |
| Conversational, register-breaking (Gabriel) | Sonnet | Internalized adherence = authentic |

No single generator wins all voices. Noren automatically matches voice types to the right generator.
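At its simplest, that matching can be pictured as a lookup from voice type to generator. The sketch below mirrors the two rows of the table. The key names and the `pick_generator` function are hypothetical, and how a voice gets classified in the first place is not covered here.

```python
# Voice type -> generator, taken from the two case studies in the table.
# The key names are illustrative labels, not Noren's internal categories.
GENERATOR_BY_VOICE_TYPE = {
    "controlled_precise": "Opus",
    "conversational_register_breaking": "Sonnet",
}


def pick_generator(voice_type: str) -> str:
    """Return the generator that scored best for this voice type."""
    try:
        return GENERATOR_BY_VOICE_TYPE[voice_type]
    except KeyError:
        raise ValueError(f"no case study covers voice type {voice_type!r}")


print(pick_generator("conversational_register_breaking"))
```

Two data points make a small table, so an unknown voice type raises an error instead of guessing.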

The pattern

Gabriel Pickard hasn't written longform in six years. Noren extracted his voice from old posts and tweets, then generated writing that sounds like him. Not like a generic tech blogger. Like him.

The profile carries the signal, not the model's memory.

Your voice is already woven through everything you've written. You just need to extract it.

Noren AI's voice extraction is built for this. See how it works.

More case studies

- Paul Graham case study: how Noren handles complex voices

- Why AI writing sounds the same: the convergence problem

- 7 writing patterns AI always misses

FAQ

Can Noren work on writers the model hasn't seen before?

Yes. Gabriel Pickard has no meaningful presence in LLM training data. The extraction found his patterns by analyzing his writing directly, not by relying on the model's prior knowledge. The generated output scored 4.0 out of 5 on voice fidelity with Sonnet, within 0.3 points of the 4.3 that Opus scored on Paul Graham, a writer the model knows well.

Why do different models score differently on the same voice profile?

Models interpret patterns differently. Opus excels at literal adherence, which works for controlled voices like Graham's. Sonnet internalizes patterns better, which works for looser voices like Pickard's. Noren matches voice types to generators automatically.

How do you evaluate whether the output sounds like the writer?

We score generated output on multiple dimensions, including whether it sounds like the writer and whether signature patterns appear in contextually appropriate places. A generator can place patterns correctly but still sound wrong if it treats them as a checklist instead of internalizing them.

Does the voice profile work across different formats?

Yes. The profile extracted from Gabriel's blog posts and tweets worked for both blog writing and Twitter threads. The same patterns hold across different formats when they're genuinely structural.

How old can writing samples be?

Gabriel's samples were from 2020, six years old. The voice patterns still held. If your voice hasn't fundamentally changed, older samples work. Mix in recent writing to capture any shifts in your actual patterns.

How do you measure if Noren actually captured the voice?

We score generated output across multiple dimensions: does it sound like you, do patterns appear in the right places, does it avoid what you never do, and does it match the format you're writing in. The composite score tells us whether the profile is working.
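As a rough picture of how four dimensions could roll up into one number, here is a sketch that assumes an unweighted mean on a 5-point scale. The field names, the equal weighting, and the example values are all assumptions for illustration, not our actual rubric or real results.

```python
from dataclasses import astuple, dataclass


@dataclass
class VoiceScores:
    """One score per dimension named above, each assumed to be out of 5."""

    sounds_like_writer: float
    pattern_placement: float
    avoids_never_dos: float
    format_fit: float

    def composite(self) -> float:
        """Unweighted mean of the four dimensions (assumed weighting)."""
        parts = astuple(self)
        return round(sum(parts) / len(parts), 2)


# Invented example values, not scores from the case study.
example = VoiceScores(4.0, 3.5, 4.5, 3.0)
print(example.composite())
```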

Your pattern is waiting.

Extract your writing patterns. Generate text that sounds like you.