How to keep your voice in AI-written emails

When colleagues spot the shift in your emails, they don't think 'AI.' They think you're annoyed at them. The trust cost is measurable.

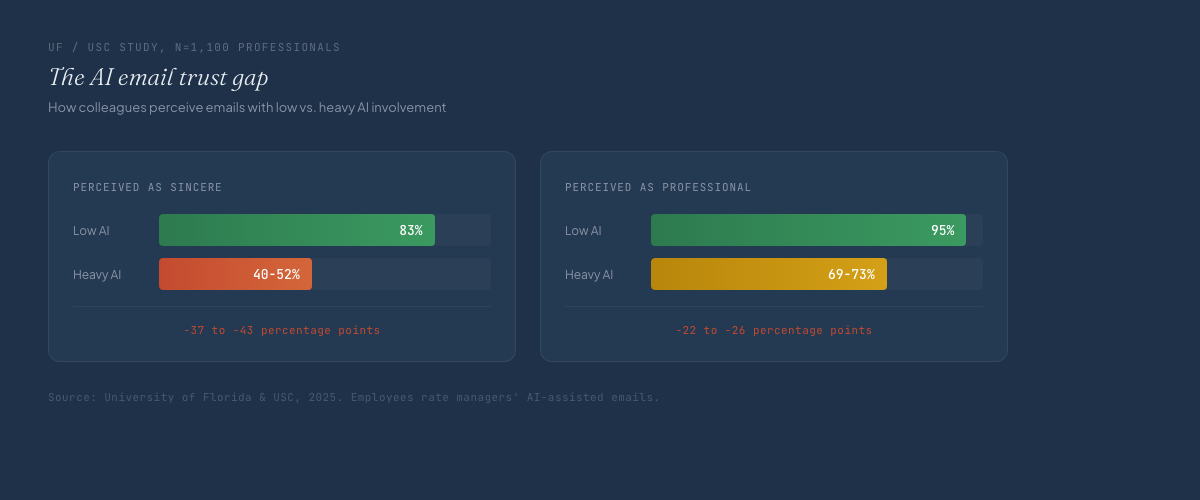

A study from the University of Florida and USC surveyed 1,100 professionals on AI-written workplace communication. When AI involvement was heavy, perceived sincerity dropped from 83% to 40-52%, and perceived professionalism dropped from 95% to 69-73%. The employees couldn't always say what had changed; they just trusted the sender less.

Key takeaways:

- Colleagues perceive AI-heavy emails as less sincere. The trust drop shows up immediately, even when content is identical.

- Tone prompts make the problem worse, not better. ChatGPT exaggerates tone adjectives instead of matching your actual voice.

- Your email voice is structural: how you open, how you close, your compression ratio. Default AI generation destroys all of it.

The problem isn't tone

Every guide to AI email style says the same thing: write better tone prompts. Be friendly but professional. Warm but direct. Match your brand voice.

Research from Nielsen Norman Group found the opposite. ChatGPT latches onto tone adjectives and exaggerates them. Tell it "be friendly" and the output drips with enthusiasm you'd never write. Tell it "be professional" and it sounds like a press release.

The same convergence that flattens all AI writing hits email hardest because your recipients have the strongest baseline. Blog readers see your work monthly. Newsletter subscribers see it weekly. Your inbox contacts see you every day, sometimes multiple times.

A journalist at the New York Times ran an experiment: AI for all work emails, one full week. Her colleagues asked if she was annoyed at them. The tone was fine on paper. The voice was wrong. There was a gap between how she actually wrote and how the model wrote, and no amount of tone instruction could close it. Slightly off, impossible to place.

Where AI emails fail

Some AI emails work fine: meeting confirmations, schedule changes, routine updates. The UF/USC study confirms it: employees accept AI involvement in communication where no voice is required.

AI fails in everything else.

Internal updates: Your team knows your voice. They read your emails every day. When a status update sounds different, the read isn't "AI wrote this." It's "something is off with this person." That's the uncanny valley of workplace communication: not obviously fake, just quietly wrong.

Client replies: Your client has six months of messages from you. They know your opening pattern: you lead with acknowledgment before delivering news, or you lead with the outcome and explain after. The model has no way to know which one is yours.

Difficult messages: Rejections, delays, disagreements. You have a structure for these. Maybe you state the decision, explain briefly, then offer next steps. Maybe you lead with context to soften the landing. Getting it wrong costs more than getting it right saves.

Cold outreach: Average cold email response rates have dropped from 8.5% to 3.43% since AI tools went mainstream, according to Instantly's 2026 benchmark report. AI standardized what "good" looks like, and every inbox now gets the same structure and the same personalization template.

Signal-personalized emails still get 18-35% response rates, but the signal has to be real. A voice profile helps you sound like a specific person rather than a template, though it doesn't fix the structural homogenization problem on its own.

Why prompts can't fix this

Custom instructions capture what you can describe about your writing. The patterns that make your emails recognizably yours are the ones you've never articulated.

Ask yourself how you close emails: two words, one line, nothing at all?

Do you add a forward-looking sentence before your sign-off? The people on your team already know. They've seen the pattern across hundreds of messages.

How you open, your compression ratio, how you close, your paragraph density. These structural patterns are invisible to you but obvious to anyone reading regularly.

Research from the Writing DNA experiment found that paragraph structure distribution (correlation: 0.81) is a stronger author identifier than vocabulary (0.61). The architecture of your prose is what makes your writing recognizably yours, not the word choices.

A system prompt can't capture what you've never consciously noticed about yourself.
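The structural habits named above (how you open, how you close, paragraph density) can be measured crudely from raw text. What follows is a minimal Python sketch, not the extraction method this article refers to: the function names `structural_features` and `summarize` are hypothetical, and treating blank lines as paragraph breaks is an assumption about how your emails are formatted.

```python
import re
from statistics import mean


def structural_features(body: str) -> dict:
    """Crude structural stats for one plain-text email body."""
    # Assumption: paragraphs are separated by one or more blank lines.
    normalized = body.replace("\r\n", "\n")
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", normalized) if p.strip()]
    word_counts = [len(p.split()) for p in paragraphs]
    return {
        "opening": paragraphs[0] if paragraphs else "",
        "closing": paragraphs[-1] if paragraphs else "",
        "paragraph_count": len(paragraphs),
        "mean_words_per_paragraph": mean(word_counts) if word_counts else 0.0,
    }


def summarize(emails: list[str]) -> dict:
    """Aggregate the per-email stats across a sample of emails."""
    feats = [structural_features(e) for e in emails]
    if not feats:
        return {"emails": 0}
    return {
        "emails": len(feats),
        "mean_paragraph_count": mean(f["paragraph_count"] for f in feats),
        "mean_words_per_paragraph": mean(f["mean_words_per_paragraph"] for f in feats),
        "openings": [f["opening"] for f in feats],
        "closings": [f["closing"] for f in feats],
    }
```

Reading the `openings` and `closings` lists side by side may be enough to surface a habit you had never noticed in your own writing.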

What actually works

The fix starts upstream: extract your voice from your actual emails before you generate with AI. Collect 15-20 emails that represent how you actually write, mixing types like replies, asks, updates, introductions, and difficult messages.

Don't pick the polished ones. Pick the typical ones.

The extraction process separates what's core to your voice from what shifts by format. Your voice in email is not identical to your voice in long-form. The identity holds, but pacing, paragraph length, and structural habits shift.

A proper extraction produces a format-specific layer on top of your core profile. When you generate an email, the model loads both. The draft that comes back sounds like an email you'd write. Review time shrinks from a rewrite to a read-through.
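One way to picture "the model loads both" is a system prompt assembled from two text layers. This is a sketch under assumptions: the function name `build_system_prompt`, the section labels, and the choice to keep the two layers as separate Markdown strings are illustrative only, not a description of any specific product's internals.

```python
def build_system_prompt(core_profile: str, email_layer: str) -> str:
    """Join a core voice profile with an email-specific layer.

    Both arguments are plain Markdown text. The core profile comes first;
    the email layer follows and is labeled as taking precedence when the
    two disagree.
    """
    core = core_profile.strip()
    layer = email_layer.strip()
    if not core:
        raise ValueError("core profile is empty")
    parts = ["# Core voice profile", core]
    if layer:
        parts += [
            "# Email-specific layer (takes precedence over the core profile)",
            layer,
        ]
    return "\n\n".join(parts)
```

The returned string would be passed as the system message to whichever model you use. Keeping the layers separate means the email layer can be swapped for another format without touching the core profile.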

What about Shortwave and Superhuman?

Shortwave's Ghostwriter and Superhuman's AI both learn from your sent folder, which makes them the closest competitors to this approach. They learn implicitly: zero effort, automatic maintenance. The tradeoff is opacity: you can't see what they extracted or correct false patterns. If the system picks up a habit from a bad week of writing, you're stuck with it. If you switch tools, the learning vanishes.

A voice profile is a Markdown file you can read, edit, and take anywhere. It works across email clients, chat apps, and any model you choose. You can delete lines that don't represent you.

Noren's Pro tier includes a living profile that updates automatically as your writing evolves. New emails feed back into the profile, so you get the transparency of a readable file with the automatic maintenance of an implicit system.

The ROI calculation

Professionals spend 28% of their workweek on email, roughly 11-15 hours. At 5.5 minutes per draft, editing overhead on AI-generated emails compounds fast.

When every AI draft needs a rewrite to sound like you, you're not saving time. You're relocating it.
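The figures above can be turned into a quick back-of-envelope check. The sketch below assumes the 5.5 minutes applies to each AI draft you have to rework; the workweek length and the number of drafts per week are hypothetical inputs chosen only for illustration.

```python
EMAIL_SHARE = 0.28        # share of the workweek spent on email (from the text)
MINUTES_PER_DRAFT = 5.5   # per-draft time (from the text)


def email_hours(workweek_hours: float) -> float:
    """Hours per week spent on email for a given workweek length."""
    return EMAIL_SHARE * workweek_hours


def rework_hours(drafts_per_week: int) -> float:
    """Hours per week spent reworking drafts at MINUTES_PER_DRAFT each."""
    return drafts_per_week * MINUTES_PER_DRAFT / 60


# Hypothetical inputs: a 40-hour week and 60 AI drafts per week.
print(round(email_hours(40), 1))   # 11.2
print(rework_hours(60))            # 5.5
```

Under those assumed inputs, rework alone would take 5.5 of the roughly 11 hours spent on email, which is the relocation described above.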

The fix is making the first draft sound right so the review is a scan, not a surgery. Noren AI's voice extraction handles your email voice alongside your other formats.

Custom instructions describe your writing. A voice profile captures it, and the gap between the two is every email that reads slightly off.

FAQ

How do I make AI emails sound like me?

Extract your writing patterns from 15-20 representative emails and build a voice profile. The profile captures your opening habits, compression ratio, sign-off structure, and word choices. Use it as a system prompt when generating email drafts. Tone prompts alone don't work: Nielsen Norman Group's research found that ChatGPT exaggerates tone adjectives rather than matching your actual style.

Can colleagues tell when an email is AI-written?

Often yes, though they may not articulate it as "AI." A University of Florida and USC study of 1,100 professionals found that perceived sincerity drops from 83% to 40-52% when AI involvement is heavy. The effect shows up as reduced trust, not as explicit detection.

How many emails do I need for voice extraction?

15-20 across different types: replies, asks, updates, introductions, and difficult messages. Mix recent emails with older ones to capture stable patterns. Format variety matters more than volume.

Does AI work for cold outreach emails?

Average cold email response rates have dropped from 8.5% to 3.43% since AI tools went mainstream, because AI standardized the format. Signal-personalized emails with genuine voice still get 18-35% response rates. The differentiator is sounding like a specific person, not like a template.
